So if you want to learn about other ways to help your linear model go to the next chapter. However, I will not cover it here since I think GLM’s are better. There are functions that look for the best transformation to use. Other methods such as square rooting the outcome or using some power function (e.g. square, cube) are also quite common. And, you guessed it, this is easily done in R: df $log_var1 <- log(df $var1) Linear-Log is where we adjsut just the predictor variable with a log transformation. This is also easily done: df $log_outcome <- log(df $outcome) Log-Log is where we adjust both the outcome and the predictor variable with a log transformation. This is done easily in R: df $log_outcome <- log(df $outcome) Log-Linear is where we adjust the outcome variable by a natural log transformation. Sounds like a great tongue-twister? Well, it is but it’s also three ways of specifying (i.e. deciding what is in) your model better. stargazer: Well-Formatted Regression and Summary Statistics Tables. # Please cite as: # Hlavac, Marek (2018). For example, stargazer provides: library(stargazer) # Two main packages allow us to compare models:īoth provide simple functions to compare multiple models. We can also compare the models in a well-formatted table that makes many aspects easy to compare. # lm(formula = famsize ~ race + marriage, data = df) fit3 = lm(famsize ~ race + marriage, data=df) For a more interesting comparison, lets run a new model with an additional variable and then make a comparison. Not surprisingly, when we compared the ANOVA and the simple linear model, they are exactly the same in overall model terms (the only difference is in how the cateogrical variable is coded-either effect coding in ANOVA or dummy coding in regression).

The anova() function works with all sorts of modeling schemes and can help in model selection.

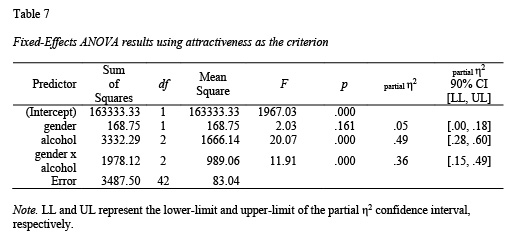

anova(fit, fit2) # Analysis of Variance Table Using the anova() function, we can compare models statistically. # Residual standard error: 1.623 on 4627 degrees of freedom For example, if we run the same model but with the linear regression function lm we get the same ANOVA table. In fact, a linear regression with a continuous outcome and categorical predictor is exactly the same (if we use effect coding). Linear regression is nearly identical to ANOVA. It would appear that “White” and “MexicanAmerican” groups are different in family size. This immediately gives us an idea of where some differences may be occuring. "darkorchid", "firebrick2")) + theme_bw() Values= c( "dodgerblue3", "coral2", "chartreuse4", Ggplot(df, aes( x=race, y=famsize)) + geom_boxplot( aes( color=race)) + scale_color_manual( guide= FALSE, We will be using some of the practice you got in Chapter 3 using ggplot2 for this. We can look at how the groups relate using a box plot. # race 4 541.2 135.300 51.367 F)) is very, very small and thus is quite significant. In the example below, we are analyzing whether family size (although not fully continuous it is still useful for the example) differs by race. To run an ANOVA model, you can simply use the aov function. Since the groups are compared using “effect coding,” the \(\alpha_0\) is the grand mean and each of the group level means are compared to it. It is a family of methods (e.g. ANCOVA, MANOVA) that all share the fact that they compare a continuous dependent variable by a grouping factor variable (and may have multiple outcomes or other covariates). Chapter 10: Where to Go from Here and Common PitfallsĪNOVA stands for analysis of variance.Chapter 9: Reproducible Workflow with RMarkdown.Chapter 3: Exploring Your Data with Tables and Visuals.Select Variables and Filter Observations.Chapter 2: Working with and Cleaning Your Data.
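As a rough sketch of the three log specifications described in this chapter, the block below simulates a small data set and fits each one with `lm`. The simulated data, the seed, and the true slope of 0.8 are my own assumptions for illustration; only the column names `outcome` and `var1` come from the text.

```r
# Simulated data (assumption: not the data used in this chapter)
set.seed(42)
n  <- 200
df <- data.frame(var1 = runif(n, min = 1, max = 10))

# Build a strictly positive outcome whose log is linear in log(var1)
df$outcome <- exp(0.5 + 0.8 * log(df$var1) + rnorm(n, sd = 0.2))

# Transformed copies of the outcome and the predictor
df$log_outcome <- log(df$outcome)
df$log_var1    <- log(df$var1)

# The three specifications
log_linear <- lm(log_outcome ~ var1,     data = df)  # log outcome, raw predictor
log_log    <- lm(log_outcome ~ log_var1, data = df)  # log outcome, log predictor
linear_log <- lm(outcome     ~ log_var1, data = df)  # raw outcome, log predictor

# Collect the slope from each model for a side-by-side look
slopes <- c(log_linear = unname(coef(log_linear)["var1"]),
            log_log    = unname(coef(log_log)["log_var1"]),
            linear_log = unname(coef(linear_log)["log_var1"]))
print(slopes)
```

You can also write the transformation directly in the formula, e.g. `lm(log(outcome) ~ var1, data = df)`, which fits the same model without creating a new column. Keep in mind that `log()` needs strictly positive values.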

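To see the `aov`/`lm` equivalence for yourself, here is a minimal sketch on simulated data. The data frame, group labels, and effect sizes are made up for illustration (they are not the data used in this chapter); only the variable names `famsize`, `race`, and `marriage` and the model formulas follow the text.

```r
# Simulated stand-in data (assumption: hypothetical groups and effects)
set.seed(1)
n  <- 300
df <- data.frame(
  race     = factor(sample(c("A", "B", "C"), n, replace = TRUE)),
  marriage = factor(sample(c("Married", "Single"), n, replace = TRUE))
)
group_shift <- c(A = 0, B = 0.5, C = 1)
df$famsize  <- 3 + unname(group_shift[as.character(df$race)]) + rnorm(n)

# Same model fit two ways, plus a larger model with one more predictor
fit  <- aov(famsize ~ race, data = df)            # ANOVA
fit2 <- lm(famsize ~ race, data = df)             # linear regression
fit3 <- lm(famsize ~ race + marriage, data = df)  # adds marriage

# Pull the overall F statistic for race out of each ANOVA table
f_aov <- summary(fit)[[1]][["F value"]][1]
f_lm  <- anova(fit2)[["F value"]][1]
print(all.equal(f_aov, f_lm))

# Nested comparison: does adding marriage improve on the race-only model?
comparison <- anova(fit2, fit3)
print(comparison)
```

The last table reports an F test for the extra term, so a small p-value there would suggest the larger model fits better than the race-only model.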